What llama.cpp's Pace Tells You About On-Prem LLM Readiness

I deployed a quantized model on a client’s on-prem GPU last month. Set up the server, pointed the app at it, ran inference. It worked. First try.

That sentence would have been fiction two years ago. Back then, llama.cpp was a single-GPU experiment. Multi-GPU was duct tape. The server mode was something you demo’d, not something you put behind a load balancer.

I’m writing this because most teams I talk to still treat self-hosted inference as “interesting but not production-ready.” The tooling passed them while they were waiting for permission.

What Will Still Bite You

I want to lead with this because the hype crowd won’t.

GPU procurement is a capital expenditure conversation, not a software download. If you don’t already have data center capacity or cloud-GPU access, the blocker isn’t the software. It’s a procurement cycle that takes months.

Ops expertise is the quieter problem. Running llama.cpp in production means someone on your team owns model updates, hardware failures, quantization tradeoffs, and latency tuning. That’s a different job than calling an API. I’ve seen teams spin up on-prem inference, celebrate for a week, then watch it rot because nobody owned it. Six months later they’re back on the API, having spent the budget anyway.

Model selection is real work. A quantized model that runs great for summarization can fall apart on code generation. There is no default. Every use case needs its own evaluation, and evaluation takes time nobody budgets for.

These are solvable. But teams that skip planning for them end up with on-prem deployments that nobody trusts.

What Changed While You Were Waiting

A year ago, I would have told you to wait. Not anymore.

Tensor parallelism landed properly. You split inference across GPUs without patching anything yourself. The server mode matured into something that actually handles concurrent requests behind a load balancer. 1-bit quantization arrived, which means models that needed high-end hardware now run on modest configs without catastrophic quality loss.
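To see why fewer bits per weight changes the hardware conversation, a back-of-envelope estimate helps: weight memory is roughly parameter count × bits per weight ÷ 8. The Python sketch below assumes that simple formula. The helper name and the bit widths are illustrative assumptions, not llama.cpp’s exact quantization formats, and the estimate covers weights only (KV cache, activations, and runtime overhead come on top).

```python
# Hypothetical helper for a rough sizing estimate. It counts model weights
# only; KV cache, activations, and runtime overhead add more on top.
def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate weight size in GB (1 GB = 1e9 bytes).

    params_billion * 1e9 params * bits / 8 bits-per-byte / 1e9 bytes-per-GB
    simplifies to params_billion * bits / 8.
    """
    return params_billion * bits_per_weight / 8


if __name__ == "__main__":
    # Illustrative bit widths (assumptions), not exact quant formats.
    for label, bpw in [("16-bit", 16.0), ("~4-bit", 4.5), ("~1.6-bit", 1.6)]:
        print(f"70B @ {label:>8}: {weight_memory_gb(70, bpw):6.1f} GB")
```

Under these assumptions a 70B model drops from about 140 GB of weights at 16-bit to about 14 GB at roughly 1.6 bits, which is the difference between a multi-GPU server and a single modest card. Whether quality holds at that width is exactly the per-use-case evaluation question raised earlier.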

CUDA optimizations ship continuously. The quantization tooling got a proper refactor. Multi-modal support is in and actively maintained.
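“Pointing the app at it” can be a plain HTTP call, because llama.cpp’s server exposes an OpenAI-compatible chat completions route. The Python sketch below uses only the standard library and assumes a llama-server instance (or a load balancer in front of several) at `http://localhost:8080`; the base URL, function names, and defaults are hypothetical choices for illustration, so adjust them for your deployment.

```python
import json
import urllib.request

# Assumption: llama-server, or a load balancer fronting several instances,
# is reachable here. Change this for your environment.
BASE_URL = "http://localhost:8080"


def build_chat_request(prompt: str, base_url: str = BASE_URL,
                       max_tokens: int = 256) -> urllib.request.Request:
    """Build a POST to the OpenAI-compatible /v1/chat/completions route."""
    payload = {
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        url=f"{base_url}/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def chat(prompt: str, base_url: str = BASE_URL, timeout: float = 60.0) -> str:
    """Send the request and return the first choice's message text."""
    req = build_chat_request(prompt, base_url)
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]
```

Calling `chat("Summarize this ticket.")` against a running server returns the model’s reply as a string. Because the route follows the OpenAI request shape, an app that already talks to a hosted API can often switch by changing only the base URL, which is what makes the “start with API, move later” path practical.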

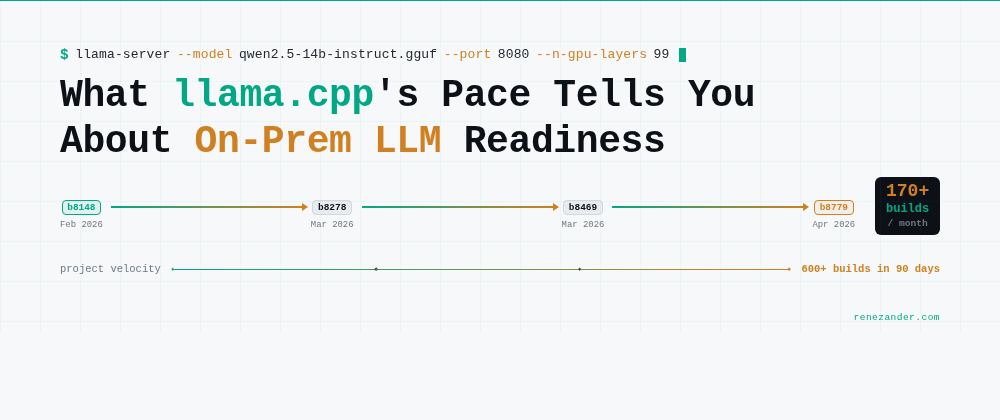

The project ships multiple builds per day through automated CI. Over the last three months, roughly 600 releases went out. That’s not a changelog. That’s a manufacturing line. When a project has that kind of contributor depth and release cadence, “not production-ready” needs a much more specific justification than it used to.

llama.cpp crossed 100K GitHub stars this year. PyTorch took seven years to get there. TensorFlow took eight. Stars aren’t a usage metric, but at that scale they signal how many engineers are building on it.

The Decision Framework

Here’s what I’ve seen actually work:

On-prem makes sense when:

- Your data cannot leave the building. Regulated industries, sensitive IP, legal constraints.

- Inference volume is high enough that API costs have become a material line item.

- You need predictable latency without network variability.

- You have an engineer who wants to own the stack.

API is still better when:

- You’re prototyping or usage is unpredictable.

- You need frontier models not available as open weights.

- Nobody on your team can own the ops burden.

- Your compliance requirements don’t actually mandate on-prem. Many teams assume they do when they don’t.
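“Material line item” is easier to argue with a break-even number. The Python sketch below assumes a simple model: on-prem cost per month is hardware spread over an amortization period plus running costs (power, hosting, and the share of an engineer’s time spent owning the stack), while API cost scales linearly with tokens. All names and figures are hypothetical placeholders, not real quotes or prices.

```python
from dataclasses import dataclass


@dataclass
class OnPremCosts:
    hardware_capex: float      # one-time GPU/server purchase (hypothetical)
    amortization_months: int   # months over which the capex is spread
    monthly_opex: float        # power, hosting, and staff time for ownership

    def monthly_cost(self) -> float:
        """Amortized hardware plus running costs for one month."""
        return self.hardware_capex / self.amortization_months + self.monthly_opex


def monthly_api_cost(tokens_per_month: float, usd_per_million_tokens: float) -> float:
    """API spend for a month, assuming flat per-token pricing."""
    return tokens_per_month / 1_000_000 * usd_per_million_tokens


def breakeven_tokens_per_month(on_prem: OnPremCosts,
                               usd_per_million_tokens: float) -> float:
    """Monthly token volume at which API spend equals on-prem spend."""
    return on_prem.monthly_cost() / usd_per_million_tokens * 1_000_000


if __name__ == "__main__":
    # Placeholder inputs: replace with your own quotes and pricing.
    costs = OnPremCosts(hardware_capex=36_000, amortization_months=36,
                        monthly_opex=2_000)
    tokens = breakeven_tokens_per_month(costs, usd_per_million_tokens=3.0)
    print(f"Break-even: {tokens:,.0f} tokens/month")
```

With these placeholder inputs the break-even lands at one billion tokens a month; below that the API is cheaper. Note that staff time sits inside `monthly_opex` on purpose: leaving out the cost of someone owning the stack is the fastest way to make on-prem look better on paper than it is.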

The mistake I see most often: treating this as a permanent binary. Start with API. Move workloads to on-prem when economics or data constraints force the conversation. The infrastructure to do it exists now. It didn’t two years ago.

The Real Question

The software is ready. The open-weight models are good enough for many workloads. The tooling is mature.

The question isn’t technical anymore. It’s organizational. Do you have someone who will own this? Does your procurement process move fast enough? Is your compliance team making decisions based on current capabilities, or based on what was true in 2023?

The infrastructure is ready. The question is whether your org chart is.