AI Decision Support Platform for Enterprise Operations

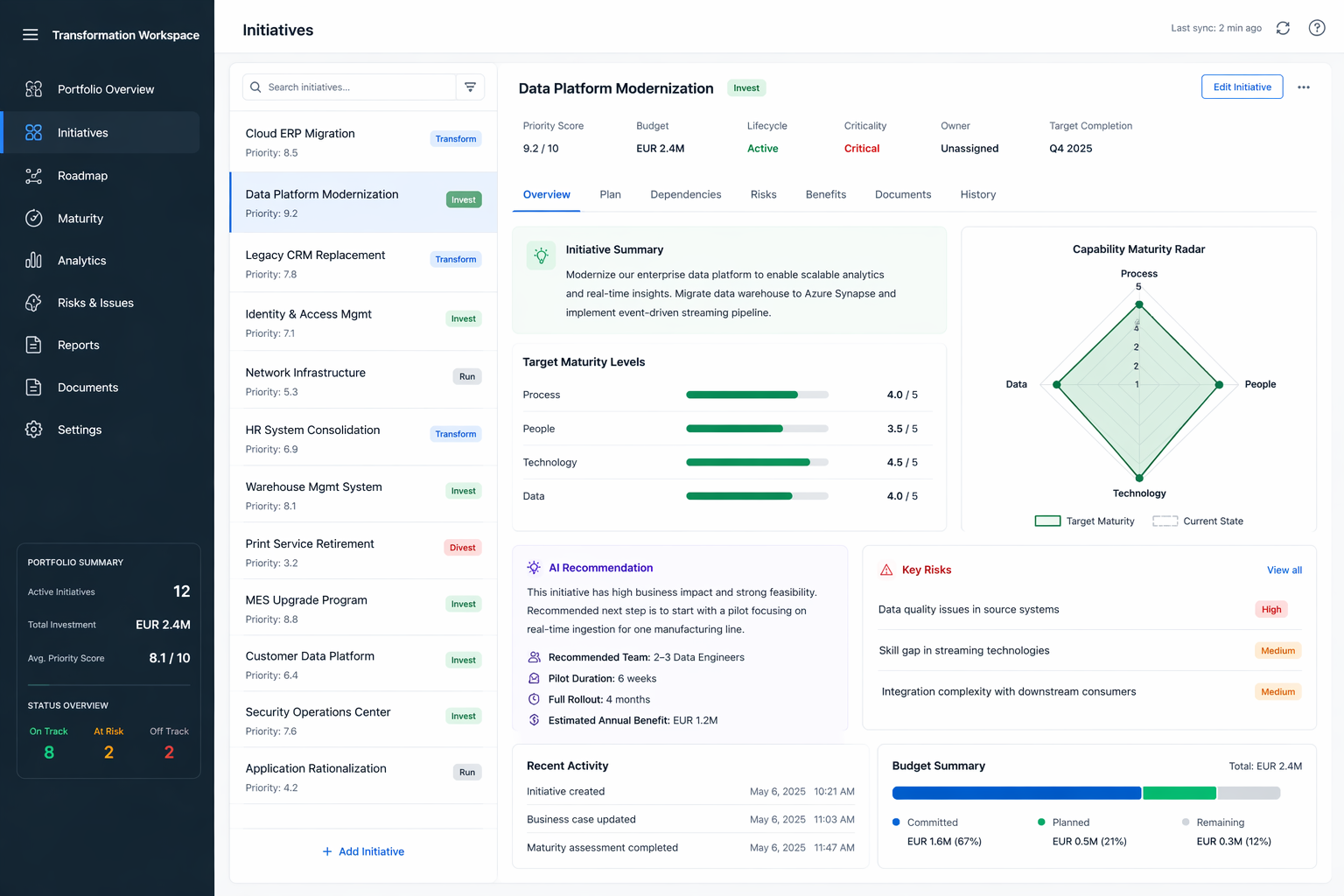

A transformation portfolio layer that scores every initiative across business value, technical complexity, capability maturity, and ROI, and produces a concrete next-step plan per initiative. Leadership allocates budget from live priority scores, not quarterly PowerPoint.

This platform runs like infrastructure, not a chatbot. Every initiative score persists across sessions (memory), every budget allocation is human-gated (approvals), writes back to the EAM catalog are scoped and isolated (sandboxing), and every reprioritization is reversible and logged (rollback).

The problem

Enterprise transformation portfolios run on PowerPoint and spreadsheets. Steering committees meet quarterly, publish a priority list of forty to a hundred initiatives, and the list is stale the day it is finalised. When a new ERP rollout, acquisition, or compliance deadline lands, and one does every quarter, the plan falls out of sync within a month.

Two symptoms follow. First, budget follows the loudest stakeholder, not the highest-value initiative. Second, every re-planning cycle is another offsite with the same fifteen people arguing from the same four slides. The cost is not just wasted offsites; it is months of delay on initiatives that would have cleared their ROI threshold the day they were scoped.

Enterprise Architecture Management tools (LeanIX, ServiceNow APM, Ardoq) solve the catalog problem: here are the 120 applications and 75 capabilities you own, with current lifecycles. They do not solve the decision problem: which three initiatives, in which order, for what budget, against which capability-maturity gap.

The solution

A scoring-first decision layer sitting on top of the existing EAM stack. Four things happen on every initiative:

Composite priority score across four dimensions:

- Business value (revenue / cost / compliance / strategic impact, normalised per initiative type)

- Technical complexity (architecture fit + integration surface + team familiarity)

- Capability maturity gap (target minus current, per Process / People / Technology / Data)

- Investment ROI window (pilot cost + rollout cost vs. annual benefit, time-discounted)
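As a rough illustration of how these four dimensions could combine, here is a minimal Python sketch. The 0-10 scale matches the scores quoted on this page; the default weights, the inversion of complexity, the ROI formula, and every function and field name are assumptions for illustration, not the platform's actual rubric.

```python
from dataclasses import dataclass

# Hypothetical default weights; the page only states they are tuneable per portfolio.
DEFAULT_WEIGHTS = {
    "business_value": 0.4,
    "technical_complexity": 0.2,
    "maturity_gap": 0.2,
    "roi": 0.2,
}


@dataclass(frozen=True)
class DimensionScores:
    """Per-dimension inputs, each assumed to be normalised to a 0-10 scale."""
    business_value: float
    technical_complexity: float  # 10 = hardest to deliver
    maturity_gap: float
    roi: float


def discounted_roi(pilot_cost, rollout_cost, annual_benefit, years, rate):
    """Time-discounted ROI: (present value of benefits - total cost) / total cost."""
    total_cost = pilot_cost + rollout_cost
    if total_cost <= 0:
        raise ValueError("total cost must be positive")
    present_value = sum(annual_benefit / (1 + rate) ** t for t in range(1, years + 1))
    return (present_value - total_cost) / total_cost


def composite_score(scores, weights=None):
    """Return the weighted composite (0-10) and each dimension's contribution."""
    weights = weights or DEFAULT_WEIGHTS
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    # Assumption: complexity counts against priority, so it is inverted.
    favourability = {
        "business_value": scores.business_value,
        "technical_complexity": 10.0 - scores.technical_complexity,
        "maturity_gap": scores.maturity_gap,
        "roi": scores.roi,
    }
    breakdown = {
        name: favourability[name] * weight / total_weight
        for name, weight in weights.items()
    }
    return round(sum(breakdown.values()), 2), breakdown
```

With these assumed weights, `composite_score(DimensionScores(8, 4, 6, 9))` returns a composite of 7.4 together with the per-dimension contributions, which is the kind of breakdown a reviewer can challenge line by line.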

Capability maturity radar per initiative with target vs. current on four axes — Process, People, Technology, Data. No more mystery-meat maturity decks; the same numbers that drove the score are visible to anyone who opens the initiative.
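The gap behind the radar is simple arithmetic: target minus current on each axis. A minimal sketch, assuming a 1-5 maturity scale and illustrative levels (neither is specified on this page; the function names are hypothetical):

```python
AXES = ("Process", "People", "Technology", "Data")


def maturity_gaps(target, current):
    """Return target minus current for each of the four axes."""
    missing = [axis for axis in AXES if axis not in target or axis not in current]
    if missing:
        raise ValueError(f"missing maturity levels for: {', '.join(missing)}")
    return {axis: target[axis] - current[axis] for axis in AXES}


def widest_gap(gaps):
    """Name the axis that is furthest from its target."""
    return max(gaps, key=gaps.get)


# Illustrative levels on an assumed 1-5 scale.
target = {"Process": 4, "People": 4, "Technology": 5, "Data": 4}
current = {"Process": 3, "People": 2, "Technology": 2, "Data": 3}
gaps = maturity_gaps(target, current)
```

Here `gaps` holds one number per axis, and `widest_gap(gaps)` points at the axis a pilot would most plausibly need to address first.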

AI recommendation engine reads initiative metadata, capability assessments, risk register, and recent activity, then emits a concrete next-step plan: team size, pilot scope, pilot duration, full-rollout duration, estimated annual benefit. Not a score — a plan. Example on-screen: “Start with a pilot focusing on real-time ingestion for one manufacturing line. 2–3 Data Engineers. 6-week pilot. 4-month full rollout. EUR 1.2M estimated annual benefit.”
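One way to picture the shape of such a plan is as a small structured record rather than free text. The sketch below is an assumption about how it could be modelled; the class and field names are hypothetical, and the values are taken from the on-screen example above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class NextStepPlan:
    """One recommended next step; all field names are hypothetical."""
    pilot_scope: str
    team_min: int
    team_max: int
    team_role: str
    pilot_weeks: int
    rollout_months: int
    annual_benefit_eur: int
    evidence: tuple = ()  # inputs the recommendation was conditioned on

    def summary(self):
        """Render the numeric part of the plan as a one-line summary."""
        benefit_m = self.annual_benefit_eur / 1_000_000
        return (
            f"{self.team_min}-{self.team_max} {self.team_role}s. "
            f"{self.pilot_weeks}-week pilot. "
            f"{self.rollout_months}-month full rollout. "
            f"EUR {benefit_m:.1f}M estimated annual benefit."
        )


plan = NextStepPlan(
    pilot_scope="real-time ingestion for one manufacturing line",
    team_min=2,
    team_max=3,
    team_role="Data Engineer",
    pilot_weeks=6,
    rollout_months=4,
    annual_benefit_eur=1_200_000,
    evidence=("initiative metadata", "capability assessments", "risk register"),
)
```

Keeping the plan as typed fields means a human reviewer can override a single value, such as the pilot duration, without rewriting the whole recommendation.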

Live portfolio view — budget summary (committed / planned / remaining), status distribution (on track / at risk / off track), total investment, average priority score. The numbers update the instant an initiative’s metadata changes.
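The figures in this view are plain aggregates over the initiative list, which is why they can be recomputed on every change. A minimal sketch, assuming that remaining budget is the total minus committed and planned, and that total investment is committed plus planned (both definitions, and all names, are assumptions):

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Initiative:
    name: str
    status: str  # "on track", "at risk" or "off track"
    committed_eur: float
    planned_eur: float
    priority_score: float


def portfolio_summary(initiatives, total_budget_eur):
    """Aggregate budget, status and score figures for the portfolio view."""
    committed = sum(i.committed_eur for i in initiatives)
    planned = sum(i.planned_eur for i in initiatives)
    scores = [i.priority_score for i in initiatives]
    return {
        "committed": committed,
        "planned": planned,
        # Assumption: remaining = total budget minus committed and planned.
        "remaining": total_budget_eur - committed - planned,
        # Assumption: total investment = committed plus planned.
        "total_investment": committed + planned,
        "status_distribution": dict(Counter(i.status for i in initiatives)),
        "average_priority": round(sum(scores) / len(scores), 2) if scores else None,
    }
```

Calling `portfolio_summary` again after any initiative changes is enough to refresh every number on the page; nothing here depends on a reporting cycle.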

The reasoning trace is always visible. Every score comes with its four-dimensional breakdown; every AI recommendation comes with the evidence — initiative description, capability gap, risk register, team availability — it was conditioned on. No black-box prioritization.

The results

Metrics drawn directly from the platform’s live view of a twelve-initiative transformation portfolio:

| Signal | Before (spreadsheet) | With the platform |
|---|---|---|
| Reprioritization cadence | Quarterly offsite | Every metadata change |
| Evidence behind a priority | Slide-deck argument | 4-dimensional numeric trace |
| New-initiative scoping time | 4–6 week workshop | Minutes (AI-proposed, human-overridable) |
| Budget status visibility | Month-end finance report | Live (committed / planned / remaining) |

Portfolio-level outcomes that fall out of this:

- Budget allocation reflects evidence, not volume. The “Data Platform Modernization” initiative scores 9.2/10 against a clearly quantified rubric; the “Print Service Retirement” initiative scores 3.2/10 against the same rubric. The steering committee’s argument moves from “should we do this?” to “is 9.2 still right given last week’s risk register update?”

- AI recommendations replace kickoff theatre. A new initiative no longer needs a six-week scoping workshop. The platform proposes the team size, pilot scope, duration, and expected benefit. The humans override where they disagree, accept where they do not.

- Live reprioritization. When a risk register update lands at 14:00, the affected initiative’s priority score is re-computed by 14:01. No quarterly-offsite lag.
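Reprioritization that is both live and reversible implies keeping every score change rather than overwriting it. The sketch below shows one possible shape for that: a per-initiative stack of scores plus an append-only audit trail. The class, its methods, and the 8.7 value in the usage note are hypothetical, not the platform's implementation.

```python
from datetime import datetime, timezone


class ScoreLedger:
    """Tracks score changes so a reprioritization can be undone and audited."""

    def __init__(self):
        self._scores = {}  # initiative name -> stack of scores, newest last
        self.audit = []    # append-only: (timestamp, initiative, action, score, reason)

    def _log(self, initiative, action, score, reason):
        self.audit.append((datetime.now(timezone.utc), initiative, action, score, reason))

    def record(self, initiative, score, reason):
        """Store a newly computed score, e.g. after a risk register update."""
        self._scores.setdefault(initiative, []).append(score)
        self._log(initiative, "update", score, reason)

    def current(self, initiative):
        return self._scores[initiative][-1]

    def rollback(self, initiative, reason="manual rollback"):
        """Restore the previous score; the rollback itself is also logged."""
        stack = self._scores[initiative]
        if len(stack) < 2:
            raise ValueError("no earlier score to roll back to")
        stack.pop()
        self._log(initiative, "rollback", stack[-1], reason)
        return stack[-1]
```

For example, recording 9.2 for “Data Platform Modernization”, then 8.7 after a risk register update, and then calling `rollback` returns the initiative to 9.2 while leaving three entries in `audit`.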

Positioning

This is not a replacement for LeanIX, ServiceNow APM, or Ardoq. Those tools own the capability catalog and the application lifecycle — and they are better at it than anything we would build. This platform is the decision layer that sits on top: it reads from the catalog, adds scoring and AI-recommended plans, and writes back status updates.

If your EAM tool already does this, you do not need it. If you are still running transformation priorities out of Excel — as most DACH mid-market enterprises I speak to are — the gap this closes is the gap between a catalog and a decision.

Stack & links

- Scoring engine: weighted composite across 4 dimensions, tuneable per portfolio

- Capability maturity model: target vs. current on 4 axes (Process / People / Technology / Data)

- AI recommendation layer: team size, pilot scope, pilot duration, rollout duration, annual benefit

- Dashboards: portfolio overview, initiative detail, roadmap, risks, budget, analytics

- Integrations: reads from EAM catalogs (LeanIX, ServiceNow APM, Ardoq), scoped status write-back via API

- Deployment: on-prem or private-cloud — no initiative data leaves the client landscape

If you are responsible for a DACH transformation portfolio and you suspect your priorities are set by volume rather than evidence, I am happy to walk through your specific portfolio. No slide deck, thirty minutes, you bring the top ten initiatives.


Ready to scope a similar engagement? Written concept in 24h.
